Software quality analysis with the use of computational intelligence

Marek Reformat a,*, Witold Pedrycz a,1, Nicolino J. Pizzi b,2

a Department of Electrical and Computer Engineering, University of Alberta, Edmonton, Alta, Canada T6G 2V4

b National Research Council, Winnipeg, Man., Canada R3B 1Y6

Abstract

Quality of individual objects composing a software system is one of the important factors that determine quality of this system. Quality of

objects, on the other hand, can be related to a number of attributes, such as extensibility, reusability, clarity and efficiency. These attributes do

not have representations suitable for automatic processing. There is a need to find a way to support quality related activities using data

gathered during quality assurance processes, which involve humans.

This paper proposes an approach, which can be used to support quality assessment of individual objects. The approach exploits techniques

of Computational Intelligence that are treated as a consortium of granular computing, neural networks and evolutionary techniques. In

particular, self-organizing maps and evolutionary-based developed decision trees are used to gain a better insight into the software data and to

support a process of classification of software objects. Genetic classifiers—a novel algorithmic framework—serve as ‘filters’ for software

objects. These classifiers are built on data representing subjective evaluation of software objects done by humans. Using these classifiers, a

system manager can predict quality of software objects and identify low quality objects for review and possible revision. The approach is

applied to analyze an object-oriented visualization-based software system for biomedical data analysis.

© 2003 Elsevier Science B.V. All rights reserved.

Keywords: Software quality assessment; Software measures; Computational intelligence; Self-organizing maps; Decision trees; Data visualization;

Classification; Knowledge extraction

1. Introduction

A number of software maintenance tasks are influenced,

to a greater or lesser degree, by the quality of software systems. A

lot of effort is put into assuring a high quality of developed

systems. Questions regarding quality arise at different stages

of a software development process. Increasing functional

and nonfunctional requirements of software systems lead to

increased complexity of software. Subsequently, quality

assessment carried out manually by managers becomes

tedious and inefficient. Managers and developers are

looking for methods, techniques and tools that can assist

them in quality related activities at different phases of

software development.

Conversion of quality issues into a representation

suitable for processing as well as different processing

methods are needed to accomplish quality assurance tasks.

The translation of quality topics into software measures

has been identified as one of the ways of dealing with

qualitative problems in a quantitative way [1]. In such a

case, a software measurement methodology governs the

collection, storage and analysis of data [2,3]. Processing

of software data is usually performed using a number of

statistical methods. In the paper, an application of

Computational Intelligence (CI) techniques to perform

analysis tasks is presented.

An approach is being proposed to support a process of

analysis of software objects. CI methods are used to

examine the values of software measures describing software

objects, and to build classifiers. Based on the values of

different measures a classification process is performed

where a predefined category is assigned to each object. A

system manager or developer can use such a classifier to

assess quality of software objects in order to identify low

quality objects for review and possible revision. These

classifiers can also be used to gain information about other

aspects of software development such as number of

programmers involved in development of a given object,

or ‘signature’ of objects belonging to different software

packages. The classifiers can increase knowledge of

managers and developers about software objects and

development processes. This knowledge can be extracted

from the classifiers in the form of if-then rules.

0950-5849/03/$ - see front matter © 2003 Elsevier Science B.V. All rights reserved.

doi:10.1016/S0950-5849(03)00012-0

Information and Software Technology 45 (2003) 405–417

www.elsevier.com/locate/infsof

* Corresponding author. Tel.: +1-780-492-2848.

1 Tel.: +1-780-492-3033.

2 Tel.: +1-204-983-8842.

E-mail addresses: [email protected] (M. Reformat), pedrycz@ee.ualberta.ca (W. Pedrycz), [email protected] (N.J. Pizzi).

The paper is organized into six sections. Section 2

covers a description of CI techniques and their application

to analysis of data. In Section 3 an experimental setting is

described together with a software system being analyzed

and a collected data set. Section 4 proceeds with a visual

examination of data. The data classification problem and

knowledge extraction are described in Section 5. The

conclusions are in Section 6. Appendices A and B serve

as a brief and focused exposition to the algorithms of CI

used in this analysis. Appendix A is a description of self-

organizing maps (SOMs). Appendix B is a concise overview

of decision trees, genetic algorithms (GAs) and genetic

programming (GP). It also contains a description of an

evolutionary-based method that supports the development of

the decision trees.

2. Computational intelligence techniques in data analysis

By their very nature, software data are complex to analyze.

There are two main reasons, namely (a) the nonlinear

character of relationships existing between data points, (b)

scarcity of data combined with a diversity of additional

factors not conveyed by the dataset itself. This complexity

leads to a need for analysis and visualization of software

data to support their understanding and interpretation.

While statistical methods have been omnipresent in

data analysis, the scope of such analysis can be enhanced by

CI technology. In a nutshell, CI [4] is a new paradigm of

knowledge-based processing that dwells on three main

pillars of granular computing [5], neural and fuzzy-neural

networks [4], and evolutionary computing [6–8]. Granular

computing is concerned with a representation of knowledge

in terms of information granules. In particular, fuzzy sets

may be considered as one of the possibilities existing there.

This option is of interest owing to the clearly defined

semantics of fuzzy sets so that they can formalize the

linguistic terms being used by users/designers when

analyzing data [9]. The mechanisms of neurocomputing

establish an adaptive framework of data analysis. Both

supervised and unsupervised learning become crucial to

the discovery of dependencies in data. Neural and fuzzy-

neural networks can serve as predictive models. Sensitivity

of the models to multi-dimensionality of input data [10] and

a need for simplicity of the models [11] mean that a structural

optimization of models is required. This type of optimization

is out of reach for standard gradient-based methods.

Evolutionary methods (GAs, GP, evolutionary strategies,

etc.) play a pivotal role there. In summary, CI is a highly

efficient environment of data analysis emphasizing a

number of features: (a) transparency and user friendliness

[11], (b) structural optimization and noninvasive character

of modeling [10]. There are already a number of applications

of CI techniques to various sub-domains of software

engineering [12], such as cost estimation, software

reliability [13], software quality [14], requirements and

formal methods [15,16], reverse engineering [17] and

knowledge modeling [18].

In this study, two representative techniques of CI,

namely SOMs and genetic decision trees are used. SOMs

are neural architectures commonly encountered in data

analysis with a panoply of applications, cf. [19,20].

The mechanisms of unsupervised learning are essential in

revealing a structure in the data while maintaining a

minimal level of structural constraints imposed on the

analysis. Here an interactive character of the analysis is

exploited by showing how a designer/user can benefit from

the findings produced by SOM [21]. He/she can easily

change a focus of analysis depending on the results

produced so far and use the findings towards further

refinement of the detailed models. In this sense, SOMs

can be treated here as an introductory vehicle of data

analysis. The essentials of SOMs in terms of the topology of

the neural network, learning and ensuing interpretation are

covered in Appendix A. The second construct is a

genetically developed decision tree that is a detailed

classifier that can support automatic evaluation of software

data and their labeling. It also allows for induction of rules,

which govern relations between different aspects of data.

The tree is constructed by means of GAs and GP that

support both structural and parametric optimization. The

details of this method are presented in Appendix B. Usage

of evolutionary techniques for synthesis of the trees gives

flexibility in choosing objectives that control a process of

construction of trees. For example, this flexibility can

accommodate deficiencies in software data, such as an

unequal representation of different categories of objects

in a classification problem.

The described CI techniques are used to analyze software

data collected in an experiment conducted at the National

Research Council, where a number of software objects were

analyzed and ranked according to their quality. The

objective of the paper is to show usefulness of CI in finding

information hidden in the data. Some of the interesting

aspects of software development that are targeted here are:

• consistency of quality assessment processes;

• attributes of high quality and low quality software objects;

• influence of number of programmers on software measures of developed objects;

• differences and similarities of software objects.

Referring to that, several classification tasks such as

classifying software artifacts with respect to quality

assessment, number of developers, and membership of

objects to different packages are described. Visual analysis

of software objects is also presented.

3. Experimental setting and data sets

An experiment has been performed to generate the data

needed to illustrate the approach proposed to support

quality analysis. In the experiment, software objects of the

system EvIdent® have been independently analyzed by two

software architects, one involved in the project from the

beginning and the other who joined a development team at

the end of the project. The architects ranked objects

according to their subjective assessment of quality of

objects. Quantitative software measures of these objects

have been compiled. Additional information about number

of programmers involved in development of each object and

membership of objects to different software packages has

been gathered.

3.1. Experimental setting

EvIdent® is a user-friendly, algorithm-rich, graphical

environment for the detection, investigation, and visualization

of novelty in a set of images as they evolve in time or

frequency. Novelty is identified for a region of interest

(ROI) and its associated characteristics. A ROI is a voxel

grouping whose primary characteristic is its time course,

which represents voxel intensity values over time. Trends

due to motion and instrumentation drift can be detected and

attenuated.

EvIdent® is written in Java and C++ and is based upon

VIStA, an application-programming interface (API) developed

at the National Research Council. While Java is a

platform-independent pure object-oriented programming

language that increases programmer productivity [22], it is

computationally inefficient with numerical manipulations of

large multi-dimensional datasets (not only is bounds-

checking performed on Java arrays, they are not based on

a consecutive memory layout visible to the programmer, so

optimizations are difficult to achieve). As a result, all

computationally intensive algorithms are implemented in

C++.

The VIStA API is written in Java and offers a generalized

data model, an extensible algorithm framework, and a suite

of graphical user interface constructs. Using the Java Native

Interface, VIStA allows seamless integration of native

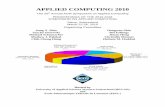

C++ algorithms. Fig. 1 shows the class model and

relationships between the three main Java packages used

in VIStA. The core data classes are in the Data Model

package. It contains the IBD_DataArray and IBD_Roi

classes (specialized for contour, polygon, and open point

ROIs), and specialized subclasses and utility classes. The

abstract class, IBD_DataArray, manages N-dimensional

data arrays, though it has been tuned to handle 3D and 4D

arrays since analysis is normally performed on temporal

data of single slice (3D data), or multi-slice (4D data)

images. The Algorithms package contains classes for

algorithms, parameters, and results from various analyses.

The GUI package is a set of classes for graphical data

representation.

3.2. Data set

All Java-based EvIdent®/VIStA objects have been used

in this study (C++-based objects have been excluded). For

each of the 312 objects, two system architects, A1 and A2,

were asked to independently rank its quality (low, medium,

or high). The architects determined quality of objects based

on their own judgment regarding degree of extensibility,

reusability, efficiency, and clarity of objects. If an object

was assessed to be in need of significant rewriting, then it

received the label, low. If an object was deemed to be so

well written that it would have been included in our coding

standards document, as an example of good programming

practice, then the object received the label, high. A label of

medium was assigned otherwise.

A set of n = 19 software metrics was compiled for each

object. A core set of 15 metrics was initially collected by

automatically parsing the Java EvIdent® source code. Table

1(a) lists the minimum, maximum, and mean for each

metric. NM, IC, KIDS, SIBS, and FACE are the respective

number of methods, inner classes, children, siblings, and

implemented interfaces for each object. DI is the object’s

depth of inheritance (including interfaces). The features

rCC and rCR are the respective ratios of lines of code to

lines of comments and overloaded inherited methods to

those that are not. CBO is a measure of coupling between

objects, a count of the number of other objects to which the

corresponding object is coupled.

RFO is a measure of the response set for the object, that

is, the number of methods that can be executed in response

to a message being received by the object. Note that the

standard Java hierarchy was not included in the calculations

of IC, KIDS, SIBS, FACE, DI, and RFO.

Fig. 1. EvIdent software model with VIStA.

LCOM, the lack of cohesion in methods, is a measure that reveals the disparate nature of an object’s methods. If $I_j$ is the set of instance variables used by method $j$, and the sets $P$ and $Q$ are defined as

$$P = \{(I_i, I_j) \mid I_i \cap I_j = \emptyset\}, \qquad Q = \{(I_i, I_j) \mid I_i \cap I_j \neq \emptyset\},$$

then, if $\mathrm{card}(P) > \mathrm{card}(Q)$, $\mathrm{LCOM} = \mathrm{card}(P) - \mathrm{card}(Q)$; otherwise $\mathrm{LCOM} = 0$ [23].
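The LCOM definition above can be sketched in a few lines of Python. The function name `lcom` and the input format (one set of instance-variable names per method) are assumptions made for illustration, as is the reading of P and Q as unordered pairs of distinct methods; the parser actually used in the study is not described here.

```python
from itertools import combinations


def lcom(instance_vars_per_method):
    """Lack of cohesion in methods for one object.

    instance_vars_per_method: list of sets; element j holds the instance
    variables used by method j (an assumed input format).
    """
    p = 0  # pairs of methods sharing no instance variables
    q = 0  # pairs of methods sharing at least one instance variable
    for vars_i, vars_j in combinations(instance_vars_per_method, 2):
        if vars_i & vars_j:
            q += 1
        else:
            p += 1
    return p - q if p > q else 0


# Hypothetical object with four methods (not taken from the study)
methods = [{"x", "y"}, {"y"}, {"z"}, set()]
print(lcom(methods))
```

In this toy object only the first two methods share a variable, so most pairs are disjoint and the measure is positive; an object whose methods all touch the same variable would score 0.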

LOC, TOK, and DEC are the number of lines of code,

tokens, and decisions for an object, respectively. The final

feature, WND, is a weighted measure of the number of nested

decisions for an object (the greater the nesting the greater

the associated weight).

There are also four metrics describing each object at the

level of its methods. Mean values of the number of lines of

code, tokens, decisions and nested decisions of all methods

of a single object have also been computed for this

investigation. These are mLOCmthd, mTOKmthd,

mDECmthd, and mWNDmthd (Table 1(b)).

Three attributes have been identified as ‘output’

attributes, which are used to determine output categories

of objects:

• Quality Assessed (QA): represents the ranking of the quality of an object assessed by the architects, where 1 means low, 2 means medium and 3 means high;

• Developers (COOK): refers to the number of programmers that were involved in development of the code for the object;

• Category (TYPE): indicates whether the object is a GUI object (1), a Data Model object (2), or a Utility object (3).

4. Visual and quantitative examination of software data

The data are first processed using SOMs. A snapshot of

the user interface to the SOM software is included in Fig. 2.

In addition to the essential results produced by the SOMs,

the user is provided with details as to the statistical aspects

of the data such as histogram of the software features,

correlations between them as well as a general summary of

the data (number of data points, number of software

measures). The maps produced through self-organization

are also updated dynamically so the user/data analyst can

monitor a way in which a structure in the data is revealed.

This also helps modify parameters of the map and make

other adjustments so that an extensive ‘what-if’ analysis can

be accomplished.

A map whose number of nodes does not exceed the

number of data points is used for the analysis; in our case it is

a 16 by 16 map (256 nodes for 312 objects). The data have been linearly normalized.

The learning uses standard Euclidean distance and the map

has been trained over 10,000 learning epochs. The results

are visualized in a series of maps (see Appendix A for more

details on the format of the maps) as shown in Fig. 3.
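The set-up described above (linear normalization, Euclidean distance, a rectangular grid of nodes) can be sketched in plain Python. This is only an illustration under stated assumptions: the learning-rate and neighborhood schedules, the use of one randomly drawn sample per epoch, and all function names are hypothetical and are not taken from the SOM software used in the study.

```python
import math
import random


def normalize(data):
    """Linearly rescale each column to [0, 1] (constant columns map to 0)."""
    cols = list(zip(*data))
    lows = [min(c) for c in cols]
    spans = [(max(c) - min(c)) or 1.0 for c in cols]
    return [[(v - lo) / s for v, lo, s in zip(row, lows, spans)] for row in data]


def best_matching_unit(weights, x):
    """Grid position of the node whose weight vector is closest to x."""
    return min(weights, key=lambda pos: math.dist(weights[pos], x))


def train_som(data, rows=16, cols=16, epochs=10000, seed=0):
    """Train a rectangular SOM; one randomly drawn sample per epoch (assumed)."""
    rng = random.Random(seed)
    dim = len(data[0])
    weights = {(r, c): [rng.random() for _ in range(dim)]
               for r in range(rows) for c in range(cols)}
    radius0 = max(rows, cols) / 2.0
    for t in range(epochs):
        frac = t / epochs
        rate = 0.5 * (1.0 - frac)  # assumed linear decay of the learning rate
        radius = 1.0 + (radius0 - 1.0) * (1.0 - frac)  # assumed shrinking radius
        x = rng.choice(data)
        br, bc = best_matching_unit(weights, x)
        for (r, c), w in weights.items():
            grid_d2 = (r - br) ** 2 + (c - bc) ** 2
            if grid_d2 <= radius ** 2:
                # Gaussian neighborhood pulls nearby nodes toward the sample
                h = rate * math.exp(-grid_d2 / (2.0 * radius ** 2))
                for k in range(dim):
                    w[k] += h * (x[k] - w[k])
    return weights


# Hypothetical two-feature data, trained on a small 4 by 4 map for speed
toy = [[0, 10], [1, 20], [2, 30], [10, 400], [11, 410]]
scaled = normalize(toy)
som = train_som(scaled, rows=4, cols=4, epochs=500)
print(best_matching_unit(som, scaled[0]))
```

Calling `train_som(scaled)` with the defaults would mirror the 16 by 16 map and 10,000 epochs mentioned in the text, at the cost of a much longer run in pure Python.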

As has been underlined, the use of SOMs is highly

developer oriented in the sense that these maps provide the

user with an interactive ‘computer eye’ so that it is easy to

analyze the data, form hypotheses, explore them in a

graphical manner, and refine the findings, if necessary. This

is particularly evident for the clustering map in Fig. 3(a). It

is easily seen that the best modules occupy an upper portion

of the map. Their distribution across the substantial area of

the map indicates that they are diversified in the sense of the

associated values of the software measures. In other words,

the modules fall under the best category and still exhibit a

wide range of the software measures. The worst modules

(denoted by ‘1’) arise as a compact region in the map, which

indicates that their software measures assume similar

values. In other words, the worst modules are well

characterized by the software measures. There is also

another region in which the worst and medium quality

software artifacts are mixed up (the region denoted as ‘1-2’).

The region of the medium quality modules (‘2’) positioned

at the left lower corner of the map is also quite compact. The

visual inspection of the regions and their category content

suggests that discriminating the best modules from the rest

could be efficient (as such categories are disjoint in the map)

whereas more concerns can be raised as to the ability of

distinguishing between the worst and intermediate

categories.

Fig. 2. Snapshot of the SOM software.

Table 1
Set of core software metrics related to (a) individual objects and (b) object’s methods

Feature      Min    Max      Mean
(a) Individual objects
NM           0      162      14.4
LOC          0      5669     296.7
TOK          0      26,611   1387.2
DEC          0      582      25.1
WND          0      1061     51.6
IC           0      20       0.7
DI           0      3        0.4
KIDS         0      10       0.3
SIBS         0      16       1.0
FACE         0      5        0.5
rCC          0      348      11.3
rCR          0      2.5      0.1
CBO          0      11       1.0
LCOM         0      9018     193.0
RFO          0      1197     66.1
(b) Object’s methods
mLOCmthd     0      108      15.9
mTOKmthd     0      16,383   1051.2
mDECmthd     0      21       1.6
mWNDmthd     0      91       3.4

The data distribution map (Fig. 3(b)) shows a fairly

uniform distribution of data. This means that the data

structure that is being revealed is not biased because of

regions of extremely high data density.

Relations between different attributes of software

objects can be visually inspected with the help of weight

maps. Fig. 4 shows two pairs of maps. The first pair,

Fig. 4(a), shows the similarity between measures LOC (lines

of code) and TOK (number of tokens). The second pair,

Fig. 4(b), is an example of dissimilarity of measures. In this

case measures DI (depth of inheritance) and CBO (coupling

between objects) are compared. The presented similarity and

difference are confirmed by correlation values of 0.99 in the

first case and 0.00 in the second. This supports the assumption

that visual comparison of distribution maps representing

different attributes is a straightforward and simple way of

determining similarities between attributes.
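The numerical check that accompanies the visual comparison can be sketched as follows. The text does not state which correlation coefficient was used; Pearson's coefficient is assumed here, and the function name and sample values are hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # convention chosen here for constant columns
    return cov / math.sqrt(var_x * var_y)


# Hypothetical LOC and TOK values for four objects (not taken from the study)
loc = [10, 50, 120, 300]
tok = [45, 240, 560, 1400]
print(round(pearson(loc, tok), 3))
```

Two measures that grow almost proportionally, as lines of code and token counts tend to, give a coefficient close to 1, which is the situation the similar weight maps reflect.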

An interesting comparison can be made between the two

output attributes TYPE and COOK. In this case knowledge

can be discovered regarding a relationship between number

of programmers involved in development and types of

developed objects. The weight maps of these attributes are

shown in Fig. 5. As can be seen, the maps are quite

different, meaning that there is little similarity between

TYPE and COOK. It can be concluded that there is no apparent

relation between the number of programmers and the type of

developed objects. A relatively uniform distribution of

programmers, Fig. 5(b), is observed, which means that

none of the types of developed software needed substantial

human resources.

5. Data-based classification of software objects

The results of a process of constructing classification

trees for the data gathered during the conducted experiment

are presented below.

Fig. 3. Clustering map (a) and data distribution map (b).

Fig. 4. Pairs of weight maps representing attribute similarity (a) and

difference (b).

Fig. 5. Pair of weight maps representing TYPE (a) and COOK (b).

5.1. Quality-based classification

A set of intriguing questions arises in the case of

inspection and evaluation of software objects performed by

individuals. In the described experiment two software

architects performed such a task for more than three

hundred objects. Two main aspects of the assessment

seem to be of special interest:

– construction of a classifier which ‘mimics’ an architect;

– extraction of knowledge about an architect and his/her preferences: this means identification of the object attributes, represented by software measures, which are of the highest significance for the architect, and of the consistency of the assessment process.

Both aspects can be addressed by construction of decision

trees. Using the approach of genetic-based synthesis

described in Appendix B such trees have been constructed

for both architects. The process of developing trees for each

architect has been performed using a 10-fold cross-validation

approach. The rate of successful classifications for training

data is around 66% and 72% for the first architect and the

second architect, respectively. In the case of testing data the

rates are 55% and 63%. One of the possible conclusions drawn

from the classification rates is related to the consistency of

the quality assessment processes performed by the architects. In

this particular case it seems that the decision tree for the

second architect imitates his/her evaluation process more closely;

his/her judgments seem to be more coherent, therefore

the synthesized tree becomes a better classifier. The best trees

are shown in Fig. 6 for the first architect, and in Fig. 7 for the

second architect. Shaded terminal nodes represent the most

frequently ‘visited’ nodes during a classification process. It

means that the paths from the root to these nodes are the most

important in classification.
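The 10-fold cross-validation protocol mentioned above can be sketched as follows. How the objects were actually shuffled and assigned to folds is not stated in the text, so the seeded shuffle, the round-robin fold assignment, and the helper names are assumptions.

```python
import random


def k_fold_indices(n_items, k=10, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # round-robin assignment
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


def accuracy(predicted, actual):
    """Fraction of matching labels, i.e. the rate of successful classifications."""
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


# 312 objects, as in the data set described in Section 3.2
splits = list(k_fold_indices(312, k=10))
print(len(splits), len(splits[0][0]), len(splits[0][1]))
```

A tree would be built on each `train` list and scored with `accuracy` on both lists, giving one training rate and one testing rate per fold; the reported figures would then correspond to rates aggregated over the ten folds.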

It has been stated earlier that if-then rules can be

extracted from the trees. Sets of rules composed out of the

most important paths in the trees (the ones which finish with

shaded nodes, Figs. 6 and 7) are presented in Tables 2 and 3

for the first and the second architects, respectively.

The analysis of the rules reflects preferences of each

architect. In the case of the first architect, it can be

concluded that the important attribute impacting his/her

classification decision is CBO (a measure of coupling

between objects). It seems that this architect has a tendency to

favor low coupling objects. In general, he/she prefers

smaller objects, the ones with small values of attributes

LOC (lines of code) and mTOKmthd (mean value of tokens

per method).

Fig. 6. Decision tree for the first architect.

Fig. 7. Decision tree for the second architect.

Table 2
Set of if-then rules for the first architect

if CBO <= 0 & LOC <= 152
then QUALITY is high

if CBO <= 0 & 152 < LOC & mTOKmthd <= 2853
or
if 0 < CBO <= 3 & mTOKmthd <= 2853 & RFO <= 219
then QUALITY is medium

if 3 < CBO <= 7 & 152 < LOC & 2853 < mTOKmthd
then QUALITY is low
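To show how such rules could act as a ‘filter’ for software objects, the rules of Table 2 are transcribed below into a small Python function. The function name and argument names are hypothetical. Because the table lists only the most frequently visited paths of the tree, the sketch returns `None` for objects that no listed rule covers.

```python
def quality_first_architect(cbo, loc, mtok_mthd, rfo):
    """Apply the Table 2 rules; returns None when no listed rule fires."""
    if cbo <= 0 and loc <= 152:
        return "high"
    if cbo <= 0 and loc > 152 and mtok_mthd <= 2853:
        return "medium"
    if 0 < cbo <= 3 and mtok_mthd <= 2853 and rfo <= 219:
        return "medium"
    if 3 < cbo <= 7 and loc > 152 and mtok_mthd > 2853:
        return "low"
    return None


# Hypothetical object: no coupling, 100 lines of code
print(quality_first_architect(cbo=0, loc=100, mtok_mthd=500, rfo=50))
```

Objects labeled "low" by such a function would be the candidates for review and possible revision.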

The second architect also prefers

smaller and simpler objects. This is reflected by the presence

of such attributes as LOC (lines of code), mDECmthd (mean

number of decisions per method) as well as CBO (a measure

of coupling between objects) in all decision trees generated

for the second architect.

Interesting observations can be drawn from the comparison

of these two sets of if-then rules. In the case of a rule

describing high quality objects one can say that the first

architect is very strict regarding coupling between objects

but more forgiving in the case of lines of code. On the

other hand, the second architect is more forgiving in the

case of coupling but more meticulous in the case of lines

of code and, additionally, in the number of decisions per

method. The rules for medium and low quality objects

are not so easy to compare. In general, the first architect

pays more attention to coupling, whereas the second one puts

more emphasis on lines of code. The fact that the classification

rate for the second architect is higher than for the

first one is reflected in the simpler structures of the tree

and the rules. It is also an indication that perception of

object’s quality of the second architect, who joined a team

later in a development process, has been more related to

the object itself than to relationships between the object

and other objects.

The presented analysis allows for confrontation of the

preferences of architects with company preferences and

company definition of quality.

5.2. Development-based classification

An interesting aspect of software development is related

to relationship between the values of software measures of a

given object and the number of programmers developing

this object. The data collected in the described experiment

are used to construct decision trees where the attribute

COOK defines the output categories. There are three

categories: 1, 2 and 3 indicating the number of programmers

involved in the development of a single object. Only a few

objects were developed by three programmers, thus the data

set contains few entries which belong to category 3. In

order to overcome this problem a special approach has been

adopted for construction of decision trees (see Appendix B,

Section 3.2). On average, the developed decision trees have been

able to properly classify 73% of training cases and 71% of

testing cases. The best tree is presented in Fig. 8.
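One common way to keep a rare category from being ignored during tree construction is to score a candidate tree by the mean of its per-category classification rates instead of its overall rate. The sketch below illustrates that idea only; it is an assumption for illustration and is not claimed to be the approach of Appendix B. The function names and labels are hypothetical.

```python
from collections import defaultdict


def per_class_rates(predicted, actual):
    """Classification rate computed separately for each true category."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for p, a in zip(predicted, actual):
        totals[a] += 1
        hits[a] += (p == a)
    return {label: hits[label] / totals[label] for label in totals}


def balanced_rate(predicted, actual):
    """Mean of the per-class rates, so every category weighs the same."""
    rates = per_class_rates(predicted, actual)
    return sum(rates.values()) / len(rates)


# Hypothetical labels: category 3 is rare and the tree never predicts it
actual = [1, 1, 1, 1, 2, 2, 2, 2, 3, 3]
predicted = [1, 1, 1, 1, 2, 2, 2, 2, 1, 2]
print(balanced_rate(predicted, actual))
```

With these labels the overall rate is 8 out of 10, while the balanced rate is lower because category 3 is never recognized; an evolutionary search driven by the balanced rate would therefore favor trees that also capture the rare category.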

The attributes that are the most influential are RFO (number

of methods executed in response to a message received by

the object), mWNDmthd (mean value of weighted number

of nested decisions per method), NM (number of methods),

FACE (number of interfaces implemented), and DI (depth

of inheritance). The analysis of the decision tree leads to

some rules which can identify number of programmers

involved in development of a single object. A set of rules is

presented in Table 4. High values of RFO attribute (number

of methods executed in response to a message received by

the object) indicate a higher number of programmers

involved in development of an object. The values of RFO

in the medium range and small number of interfaces

(FACE) point to two programmers. Small values of RFO (a

number of methods executed in response to a message

received by the object) indicate involvement of a single

programmer.

Table 3
Set of if-then rules for the second architect

if LOC <= 120 & CBO <= 1 & mDECmthd <= 3
then QUALITY is high

if 120 < LOC <= 4020 & mDECmthd <= 3
then QUALITY is medium

if 120 < LOC <= 4020 & 4 < mDECmthd & 150 < WND
then QUALITY is low

Fig. 8. Decision tree for the output COOK.

Table 4
Set of if-then rules for the output COOK

if RFO <= 135 & DI <= 2 & mWNDmthd <= 21 & NM <= 48
then ONE COOK

if 135 < RFO <= 397 & FACE <= 4
then TWO COOKS

if 696 < RFO
then THREE COOKS

The same tree can be analyzed from the point of view of

influence of the number of programmers on values of

software measures of objects. In this case it can be said that

a larger number of programmers leads to higher values of IC

(number of inner classes) and higher values of NM (number

of methods). An interesting observation is related to FACE

(number of interfaces implemented). In general one can say

that a single programmer has a tendency to develop objects

with high values of FACE (number of interfaces

implemented).

5.3. Category-based classification

Another analysis of the data collected in the described

experiment is related to categorizing objects as elements of

different software packages. This task is to find software

measures that describe each package. ‘Description’ of

packages via the values of different software measures can

increase knowledge of software managers about objects,

which constitute given packages. This knowledge, on the

other hand, can be used to create teams performing different

maintenance activities. Let us assume that some modifications

have to be made to the system module that is related

to the GUI, and that it is known that objects of this module have

a tendency to be small but with a high degree of coupling. In

such a situation a manager can assemble a team of developers

that have experience of working with such objects.

The experiment data set contains information about

membership of an object to a given package—the attribute

TYPE. Three different values of attribute TYPE have been

identified: 1—GUI objects, 2—Data Model objects, and

3—Utility objects. Using the TYPE attribute as the

output, a set of decision trees has been generated. It

turned out that the measures which occur in almost all

generated trees are NM (number of methods) and FACE

(number of interfaces implemented). The best tree and

splitting of attributes for this tree are shown in Fig. 9. A

classification rate of the tree is 77% for training data and

71% for testing data. The most important rules are shown

in Table 5.

The knowledge about a ‘signature’ of each specific

software package may have impact on a way the workload

inside the team of developers can be distributed at the time

of modifications of software objects in the packages.

Fig. 9. Decision tree for the output TYPE.

Table 5
Set of if-then rules for output TYPE

if NM <= 3 & mWNDmthd <= 62
or
if 3 < NM <= 92 & mLOCmthd <= 11 & FACE <= 0
then TYPE is GUI

if 3 < NM <= 92 & mLOCmthd <= 11 & 0 < FACE <= 2
then TYPE is DATA MODEL

if 92 < NM <= 128
or
if NM <= 3 & 62 < mWNDmthd
or
if 3 < NM <= 92 & 11 < mLOCmthd <= 84 & 62 < mWNDmthd
then TYPE is UTILITY

M. Reformat et al. / Information and Software Technology 45 (2003) 405–417

6. Conclusions

CI provides a set of new methods and techniques for the analysis of quality-based software engineering data. An example is shown here, in which software engineering data are examined in order to analyze, as well as support, the process of quality assessment of software objects. SOMs have been used to visualize similarities and differences in software data. Decision trees, constructed using a new evolutionary-based technique, have been applied to the identification of good/bad software objects and to the classification of objects that belong to previously specified groups. Sets of if-then rules extracted from the data provided additional information about the quality assessment activities performed by two architects, as well as about relationships between software objects and the number of programmers involved in their development. Relations between attributes of software objects and different software packages have also been discovered.

The work presented here can be seen as an introduction to the analysis of software engineering data using CI techniques. It would be interesting to compare the knowledge extracted from the data with the evaluation criteria used by both architects performing quality assessment of objects. Another interesting question concerns the possible contributions of the proposed techniques to quality-related techniques and methods.

Acknowledgements

The authors would like to acknowledge the support of the Alberta Software Engineering Research Consortium (ASERC) and the Natural Sciences and Engineering Research Council of Canada (NSERC).

Appendix A. Self-organizing map

A.1. Concept

The concept of the SOM was originally introduced by Kohonen, and the SOM is currently used as one of the generic neural tools for structure visualization. SOMs are regarded as regular neural structures (neural networks) composed of a rectangular (square) grid of artificial neurons. The objective of SOMs is to visualize high-dimensional data in a low-dimensional structure, usually in the form of a two- or three-dimensional map. To make this visualization meaningful, the key requirement is that the low-dimensional representation of the originally high-dimensional data preserve the topological properties of the data set. In a nutshell, this means that two data points (patterns) that are close to each other in the original high-dimensional feature space should retain this similarity (or resemblance) in their representation (mapping) in the reduced, low-dimensional space in which they are visualized. Conversely, two distant patterns in the original feature space should remain distant in the low-dimensional space. Put more descriptively, the SOM acts as a computer eye that helps us gain insight into the structure of the data set and observe relationships between patterns originally located in a high-dimensional space. In this way, we can confine ourselves to the two-dimensional map, which helps us see the essential relationships between the data as well as dependencies between the software measures themselves. To discuss the essence of the architecture, the $n$ features of the patterns (data) are organized in a vector $\mathbf{x}$ of real numbers located in the $n$-dimensional space of real numbers, $\mathbb{R}^n$. The SOM comes as a collection of linear neurons organized in the form of a regular two-dimensional grid (array), Fig. A1.

Each neuron is equipped with modifiable connections $\mathbf{w}(i, j)$, arranged into an $n$-dimensional vector $\mathbf{w}(i, j) = [w_1(i, j)\; w_2(i, j)\; \cdots\; w_n(i, j)]$. The two indexes ($i$ and $j$) identify the location of the neuron on the grid. The neuron calculates the Euclidean distance $d$ between its connections and a given input $\mathbf{x}$:

$$y(i, j) = d(\mathbf{w}(i, j), \mathbf{x}) \qquad \text{(A1)}$$

The same input $\mathbf{x}$ affects all neurons. The neuron with the shortest distance between the input and its connections is activated to the highest extent and is declared the winning neuron; we also say that it matches the given input $\mathbf{x}$. The winning node is defined by the coordinates $(i_0, j_0)$ such that

$$(i_0, j_0) = \arg\min_{(i, j)} d(\mathbf{w}(i, j), \mathbf{x}) \qquad \text{(A2)}$$

The connections are updated as follows:

$$\mathbf{w}_{\mathrm{new}}(i_0, j_0) = \mathbf{w}(i_0, j_0) + \alpha(\mathbf{x} - \mathbf{w}(i_0, j_0)) \qquad \text{(A3)}$$

where $\alpha$ denotes the learning rate, $\alpha > 0$. In addition to changing the connections of the winning node (neuron), we allow this neuron to affect its neighbors (viz. the neurons located at nearby coordinates of the map). This influence is quantified by a neighbor function $\Phi(i, j, i_0, j_0)$. In general, this function satisfies two intuitively appealing conditions: (a) it attains its maximum, equal to one, for the winning node, $i = i_0$, $j = j_0$, and (b) the farther a node is from the winning node, the lower the value of the function (in other words, the updates are less vigorous). Evidently, there may also be nodes where the neighbor function is zero. Considering the above, Eq. (A3) can be rewritten in the following form:

$$\mathbf{w}_{\mathrm{new}}(i, j) = \mathbf{w}(i, j) + \alpha\,\Phi(i, j, i_0, j_0)(\mathbf{x} - \mathbf{w}(i, j)) \qquad \text{(A4)}$$

Commonly, we use the neighbor function in the form

$$\Phi(i, j, i_0, j_0) = \exp(-\beta((i - i_0)^2 + (j - j_0)^2))$$

with the parameter $\beta$ (equal to 0.1 or 0.05, depending on the series of experiments) modeling the spread of the neighbor function.
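A single presentation of an input to the map, covering the distance computation (A1), the choice of the winning node (A2) and the neighborhood-weighted update (A4), can be sketched as follows. This is an illustrative sketch in plain Python, not the implementation used in the experiments; the function name `som_step`, the nested-list layout of the weights, and the default values of `alpha` (learning rate) and `beta` (spread of the neighbor function) are assumptions.

```python
import math


def som_step(weights, x, alpha=0.1, beta=0.1):
    """One SOM update for input x; weights[i][j] is the weight vector of neuron (i, j)."""
    p, q = len(weights), len(weights[0])
    # (A1)/(A2): Euclidean distance for every neuron; the smallest distance wins
    i0, j0 = min(
        ((i, j) for i in range(p) for j in range(q)),
        key=lambda ij: math.dist(weights[ij[0]][ij[1]], x),
    )
    # (A4): move every neuron toward x, scaled by the Gaussian neighbor function
    for i in range(p):
        for j in range(q):
            phi = math.exp(-beta * ((i - i0) ** 2 + (j - j0) ** 2))
            w = weights[i][j]
            weights[i][j] = [wk + alpha * phi * (xk - wk) for wk, xk in zip(w, x)]
    return i0, j0


grid = [[[0.0, 0.0], [1.0, 1.0]], [[2.0, 2.0], [3.0, 3.0]]]
winner = som_step(grid, [1.0, 1.0], alpha=0.5, beta=0.1)
print(winner)  # (0, 1): that neuron already coincides with the input
```

For the winning node the neighbor function equals one, so the update reduces to (A3); shrinking the neighborhood during training would correspond to increasing `beta` between calls.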

The above update expression (A4) applies to all the nodes $(i, j)$ of the map. As we iterate (update) the connections, the neighbor function shrinks: at the beginning we start with a large region of updates, and as the learning settles down we reduce the size of the neighborhood. Overall, the developed SOM is fully characterized by the matrix of connections of its neurons, that is $W = [\mathbf{w}(i, j)]$, $i = 1, 2, \ldots, p$; $j = 1, 2, \ldots, p$ (note that we are now dealing with a square $p$ by $p$ grid of neurons). By carefully arranging the weight matrix into several planes (arrays), we can produce a variety of important views of the data. Three such views are considered: weight maps, the region (cluster) map, and the data density map.

Fig. A1. A basic topology of the SOM constructed as a grid of identical processing units (neurons).

A.2. Weight maps

The weight matrix W can be viewed as a pile of layers of

p by p maps indexed by the variables. We regard W as a

collection of two-dimensional matrices each corresponding

to a certain feature of the pattern, say

$$[w_1(i, j)],\ [w_2(i, j)],\ \ldots,\ [w_n(i, j)]$$

Each of these matrices contains information about the

weights (or the features of the patterns as the weights tend to

follow the features once the self-organization has been

completed).

The most useful information we can get from these

weight maps deals with an identification of possible

associations between the features. If the two weight maps

are very similar, this implies that the two corresponding

features they represent are highly related. If two weight

maps are very dissimilar, this means the two variables they

represent are not closely interrelated. In addition, we can

also determine the feature association for a data subset. In

the sequel, it may lead to the identification of possible

redundancies of some features (e.g. we state that two features are redundant if their weight maps are very close to each other).
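One way to make the comparison of weight maps concrete is sketched below. The text does not state how similarity between two maps is measured, so the Pearson correlation used here is an assumption, as are the function names `weight_plane` and `plane_correlation` and the toy matrix `W`.

```python
import math


def weight_plane(W, k):
    """Return the weight map of feature k from W[i][j] = weight vector of neuron (i, j)."""
    return [[w[k] for w in row] for row in W]


def plane_correlation(a, b):
    """Pearson correlation between two weight maps, flattened row by row."""
    xs = [v for row in a for v in row]
    ys = [v for row in b for v in row]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((u - mx) * (v - my) for u, v in zip(xs, ys))
    sx = math.sqrt(sum((u - mx) ** 2 for u in xs))
    sy = math.sqrt(sum((v - my) ** 2 for v in ys))
    return cov / (sx * sy)  # undefined (division by zero) for a constant map


# Toy 2-by-2 map with three features: feature 1 doubles feature 0, feature 2 reverses it
W = [[[1.0, 2.0, 4.0], [2.0, 4.0, 3.0]],
     [[3.0, 6.0, 2.0], [4.0, 8.0, 1.0]]]
r01 = plane_correlation(weight_plane(W, 0), weight_plane(W, 1))
r02 = plane_correlation(weight_plane(W, 0), weight_plane(W, 2))
print(round(r01, 6), round(r02, 6))  # 1.0 -1.0
```

Under this assumed measure, a correlation close to one in absolute value would flag two features as candidates for redundancy.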

A.3. Region (clustering) map

A slight transformation (summarization) of the original

map W allows us to visualize homogeneous regions in the

map, viz. the regions in which the data are very similar. This

allows us to form boundaries between such homogeneous

regions of the map. Owing to the character of this

transformation, we will be referring to the resulting areas

as clusters and calling the map a region (or clustering) map.

The calculations leading to the region map are straightforward: for each location of the map, say $(i, j)$, we assign a certain level of brightness to each of these results, which gives us a useful vehicle for identifying regions in the map that are highly homogeneous. Likewise, the entries with dark color form a boundary between the homogeneous regions. Clusters can be easily identified by finding bright areas surrounded by these dark boundaries. For some data sets there are distinct clusters, so the dark boundaries in the clustering map are clearly visible. There could also be cases where the data are inherently scattered, so that the clustering map shows no clear dark boundaries. The clustering map is an important vehicle for visual inspection of the structure in the data. It delivers strong support for descriptive modeling: the designer can easily understand what the structure looks like in terms of clusters. In particular, one can analyze

the size of the clusters, their location in the map (that tells

about closeness and possible linkages between the clusters).

By looking at the boundaries between the clusters, the

region map tells us how strongly these clusters are identified

as separate entities distinct from each other. Overall, we can

look at the region map as a granular signature of the data.

These visualization aspects of SOMs underline their character as a user-friendly vehicle of descriptive data analysis. They also point to the essential differences between SOMs and other popular clustering techniques driven by objective function minimization (say, FCM and the like). Note that FCM solves an interesting and well-defined optimization problem but does not provide the same interactive environment for data analysis.

A.4. Data distribution map

The previous maps were formed directly from the

general map W produced through self-organization. It is

advantageous to supplement all these maps with a data

distribution (density) map. This map shows (again on a

certain brightness scale) a distribution of data as they are

allocated to the individual neurons on the map. The data density map can be used in conjunction with the region map, as it indicates how many patterns are behind a given cluster. In this sense, we may eventually discard a certain cluster in our descriptive analysis as not carrying enough experimental evidence.
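The data density map amounts to counting, for each neuron, the patterns for which it is the winning neuron. The sketch below assumes a trained weight matrix stored as nested lists; the function name `density_map` and the toy data are hypothetical.

```python
import math


def density_map(W, data):
    """Count, for each neuron (i, j), the patterns for which it is the winning neuron."""
    p, q = len(W), len(W[0])
    counts = [[0] * q for _ in range(p)]
    for x in data:
        # Winning neuron: the one whose weight vector is closest to the pattern
        i0, j0 = min(
            ((i, j) for i in range(p) for j in range(q)),
            key=lambda ij: math.dist(W[ij[0]][ij[1]], x),
        )
        counts[i0][j0] += 1
    return counts


W = [[[0.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [1.0, 1.0]]]
data = [[0.1, 0.1], [0.0, 0.2], [0.9, 0.8]]
print(density_map(W, data))  # [[2, 0], [0, 1]]
```

Neurons with a count of zero or near zero are the ones whose clusters carry little experimental evidence.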

Overall, the sequence realized so far can be described as follows: high-dimensional data → SOM → region map, data distribution map, and weight maps → interactive user-driven descriptive analysis.

Appendix B. Genetic decision trees

B.1. Decision trees—concept

A decision tree is a well-known technique for representing the underlying structure of a dataset. It is a model that is both predictive and descriptive. It is called a decision tree because the resulting model is presented in the form of a tree structure. The visual presentation makes the decision tree model very easy to understand and assimilate. Decision trees are most commonly used for classification (predicting what group a case belongs to) but can also be used for regression (predicting a specific value). A decision tree consists of a series of nodes. The top node is called the root node, and the tree grows down from the root. Each node contains information about the instances of the data at that level. As one moves down the tree, nodes are connected by branches. Each level of the tree splits the data from the previous level into distinct groups.

In classification problems, decision trees are constructed from training data containing sample vectors with one or several measurement variables (or features) and one variable that determines the class of the data sample. Decision trees are generally learned by means of a top-down growth procedure. At each node, called an attribute node, the training data is partitioned among the child nodes using a splitting rule, Fig. A2. A splitting rule can be of the form: if $V < c$ then $s$ belongs to $L$, otherwise to $R$, where $V$ is the selected variable, $c$ is a constant, $s$ is the data sample, and $L$ and $R$ are the left and right branches of the node. In this case, splitting is done using one variable, and the node has two branches and thus two children. In general, a node can have more branches, and the splitting can be based on several variables. The best split for each node is searched for using a ‘purity’ function calculated from the data. The data is considered pure when it contains data samples from only one class. The most frequently used purity functions are entropy, the Gini index and the twoing rule. The portion of the data that falls into each child node is partitioned again in an effort to maximize the purity function. The tree is grown until the purity of the data in each leaf node reaches a predefined level. Each leaf node is then labeled with a class determined by the majority rule: the node is labeled with the class to which the majority of its training data belongs. Such nodes are called terminal nodes. One of the problems of the tree methodology is choosing the right size of the tree.
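The search for the best split at a single node can be illustrated with the Gini index. The sketch below is a conventional top-down split search, not the evolutionary method of this paper; it minimizes the weighted Gini impurity of the two children, which is equivalent to maximizing purity. The function names and the choice of midpoints between observed values as candidate constants are assumptions.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 when all labels belong to one class."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_split(values, labels):
    """Find the constant c of the rule 'if V < c then L else R' with the lowest impurity."""
    best_c, best_score = None, float("inf")
    points = sorted(set(values))
    for lo, hi in zip(points, points[1:]):
        c = (lo + hi) / 2  # candidate threshold between two observed values
        left = [y for v, y in zip(values, labels) if v < c]
        right = [y for v, y in zip(values, labels) if v >= c]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_c, best_score = c, score
    return best_c, best_score


nm = [1, 2, 3, 10, 11, 12]
kind = ["GUI", "GUI", "GUI", "UTILITY", "UTILITY", "UTILITY"]
print(best_split(nm, kind))  # (6.5, 0.0): both children are pure
```

Applying `best_split` recursively to each child, and stopping when the impurity falls below a chosen level, yields the top-down growth procedure described above.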

A method of building decision trees based on evolutionary computation (EC) is presented. In this approach, the process of constructing trees, that is, defining splits and selecting attributes, is governed by an objective function, which can be arbitrarily specified by a user and can reflect the specifics of the data.

B.2. Evolutionary computation

A variety of evolutionary algorithms (EAs), such as genetic algorithms (GAs) [6,24] and genetic programming (GP) [7], have been successfully applied to numerous problems [8] at the level of both structural and parametric optimization. EAs are search methods utilizing the principles of natural selection and genetics [24].

B.2.1. Genetic algorithms

GA [6,24] is one of the most popular methods of

evolutionary computation, Fig. A3. In general, GAs

operate on a set of potential solutions to a given problem.

These solutions are encoded into chromosome-like data

structures named genotypes. A set of genotypes is called a

population. The genotypes are evaluated based on their

ability to solve the problem represented by a fitness

function. This function embraces all requirements imposed

on the solution of the problem. The results of the

evaluation are used in a process of forming a new set of

potential solutions. The choice of individuals to be

reproduced into the next population is performed in a

process called selection. This process is based on the

fitness values assigned to each genotype.

The selection can be performed in numerous ways. One

of them is called stochastic sampling with replacement. In

this method the entire population is mapped onto a roulette

wheel where each individual is represented via the area

corresponding to its fitness. Individuals of an intermediate

population are chosen by repetitive spinning of a roulette

wheel.
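Stochastic sampling with replacement can be sketched as repeated spins of a wheel whose sectors are proportional to fitness. The sketch assumes non-negative fitness values with a positive sum; the function name `roulette_select` and the optional `rng` argument are hypothetical.

```python
import random


def roulette_select(population, fitness, k, rng=random):
    """Pick k individuals with replacement, each spin proportional to fitness."""
    total = sum(fitness)
    chosen = []
    for _ in range(k):
        spin = rng.uniform(0, total)  # position of the pointer on the wheel
        acc = 0.0
        for individual, f in zip(population, fitness):
            acc += f  # end of this individual's sector
            if spin < acc:
                chosen.append(individual)
                break
        else:
            chosen.append(population[-1])  # guard against floating-point round-off
    return chosen


rng = random.Random(1)
intermediate = roulette_select(["a", "b", "c"], [1.0, 3.0, 6.0], k=5, rng=rng)
print(len(intermediate))  # 5
```

Because sampling is with replacement, a highly fit individual may appear several times in the intermediate population.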

Finally, the operations of crossover and mutation are performed on individuals from the intermediate population. This process leads to the creation of the next population. Crossover allows an exchange of information among individual genotypes in the population and provides innovative capability to the GA. It is applied to randomly paired genotypes from the intermediate population with the probability $p_c$. The genotypes of each pair are split into two parts at a crossover point generated at random, and then recombined into a new pair of genotypes. Mutation, on the other hand, ensures the diversity needed in the evolution. In this case, each bit in every substring of a genotype is altered with the probability $p_m$.
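The two genetic operators can be sketched on genotypes encoded as bit strings. This is a minimal illustration, assuming both genotypes of a pair have the same length; the function names and the use of Python strings of '0' and '1' are assumptions.

```python
import random


def crossover(a, b, pc, rng=random):
    """One-point crossover applied to a pair of bit strings with probability pc."""
    if rng.random() < pc and len(a) > 1:
        point = rng.randint(1, len(a) - 1)  # split point strictly inside the string
        return a[:point] + b[point:], b[:point] + a[point:]
    return a, b


def mutate(genotype, pm, rng=random):
    """Flip each bit independently with probability pm."""
    return "".join(
        ("1" if bit == "0" else "0") if rng.random() < pm else bit
        for bit in genotype
    )


rng = random.Random(0)
child1, child2 = crossover("0000", "1111", pc=1.0, rng=rng)
print(mutate("0101", pm=1.0, rng=rng))  # 1010: every bit is flipped when pm = 1
```

In practice `pm` is kept small, so that mutation perturbs genotypes occasionally rather than randomizing them.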

Fig. A2. Simple splitting rule with V as splitting variable.

Fig. A3. Concept of genetic algorithm.


B.2.2. Genetic programming

Another EA is GP [7]. GP can be seen as an extension of the genetic paradigm into the area of programs. This means that the objects constituting the population are not fixed-length strings that encode possible solutions to the given problem, but programs, which ‘are’ the candidate solutions to the problem. In general, these programs are expressed as parse trees rather than as lines of code. The simple program ‘a + b*c’, for example, would be represented as a tree whose root is the operator +, with the operand a as one child and the subtree for b*c (the operator * with children b and c) as the other.

Such a representation of candidate solutions, combined with some constraints regarding their structure, allows for a straightforward representation of different structures. Each structure is evaluated by solving a given problem.
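The parse-tree view of the program ‘a + b*c’ can be sketched with nested lists. The encoding `[operator, left, right]` and the function names are assumptions chosen for illustration.

```python
import operator

OPS = {"+": operator.add, "*": operator.mul}

# 'a + b*c' as a nested list: [operator, left subtree, right subtree]
program = ["+", "a", ["*", "b", "c"]]


def evaluate(node, env):
    """Recursively evaluate a parse tree; leaves are variable names looked up in env."""
    if isinstance(node, str):
        return env[node]
    op, left, right = node
    return OPS[op](evaluate(left, env), evaluate(right, env))


def depth(node):
    """Number of operator levels in the tree; a single leaf has depth 0."""
    if isinstance(node, str):
        return 0
    return 1 + max(depth(child) for child in node[1:])


print(evaluate(program, {"a": 1, "b": 2, "c": 3}))  # 7
print(depth(program))  # 2
```

Because the program is a data structure, genetic operators can replace or swap whole subtrees, and a depth function of this kind makes it easy to enforce structural constraints.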

All stages of EAs (selection, crossover and mutation) are applied to structures. Modifications of structures are performed via manipulations on the lists [7]. This results in ‘improvement’ of populations from generation to generation.

In the case of GP, an important aspect is the generation of the initial population. All lists representing structures are generated randomly, with a constraint on the depth. The same approach is used for the mutation operation, where new substructures and structures are created. In the case of crossover, a procedure is in place for checking the correctness of structures.

B.3. Evolutionary development of decision trees

B.3.1. Concept

An explanation of the concept of the GA–GP-based approach to constructing decision trees is presented here. It puts emphasis on targeting two problems simultaneously: searching for the best split in attribute domains and finding the best choice of variables for splitting rules. It also contains a discussion of different objective functions controlling the synthesis process.

Combining the strength of GA in searching numeric spaces with the strength of GP in searching symbolic spaces results in an approach where the steps of constructing decision trees (defining splits, selecting variables) can be performed simultaneously and ‘controlled’ by a fitness function. Such a fitness function can represent different objectives essential for a given classification process. The idea is to construct a decision tree by performing a two-level optimization: parametric and structural.

A graphical illustration of the approach is shown in Fig. A4. GA is used at the higher level, and its goal is to search for the best splitting of attribute domains. GA operates on a population of strings, where each string (a GA chromosome) represents a possible splitting for all attributes. Each GA chromosome is evaluated via a fitness function, which in this case is a GP process: GP searches for the best decision tree that can be constructed for a given splitting. Consequently, GP operates on a population of lists, which are blueprints of decision trees, Fig. A5. In other words, each individual of the GP population (a list) represents a single decision tree. A population of decision trees evolves according to the rules of selection and genetic operations such as crossover and mutation. Each individual in the population is evaluated by means of a certain fitness function. The GP process returns the best value of the fitness function, together with the best decision tree, to GA. The returned fitness function value becomes the fitness value assigned to the GA chromosome. Such an approach ensures that each GA chromosome is evaluated based on the best possible decision tree that can be constructed for the splitting represented by that chromosome. Such a process, repeated a number of times, ends with the best splitting and the best decision tree.
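The nesting of the two levels, where the fitness of a splitting is the fitness of the best tree found for it, can be sketched in a heavily simplified form. In the sketch below, random sampling of thresholds stands in for the GA and exhaustive enumeration of one-split trees stands in for the GP; both substitutions, all function names, and the toy data are assumptions made only to keep the example short.

```python
import random


def inner_search(thresholds, X, y):
    """Structural level (stand-in for GP): best one-split tree for fixed thresholds."""
    classes = sorted(set(y))
    best_fit, best_tree = -1.0, None
    for attr, c in enumerate(thresholds):
        for left in classes:
            for right in classes:
                hits = sum(
                    (left if row[attr] < c else right) == label
                    for row, label in zip(X, y)
                )
                if hits / len(y) > best_fit:
                    best_fit, best_tree = hits / len(y), (attr, left, right)
    return best_fit, best_tree


def outer_search(X, y, trials=200, rng=random):
    """Parametric level (stand-in for GA): sample thresholds, score each by the inner search."""
    lows = [min(col) for col in zip(*X)]
    highs = [max(col) for col in zip(*X)]
    best = (-1.0, None, None)
    for _ in range(trials):
        thresholds = [rng.uniform(lo, hi) for lo, hi in zip(lows, highs)]
        fitness, tree = inner_search(thresholds, X, y)  # fitness of this 'chromosome'
        if fitness > best[0]:
            best = (fitness, thresholds, tree)
    return best


X = [[1, 5], [2, 3], [8, 4], [9, 6]]
y = ["A", "A", "B", "B"]
fitness, thresholds, tree = outer_search(X, y, rng=random.Random(0))
print(fitness, tree)  # 1.0 (0, 'A', 'B')
```

The point of the sketch is the control flow: the outer level never scores a splitting directly, it only receives the best fitness that the inner level could achieve for that splitting.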

B.3.2. Fitness function

One of the most important aspects of the approach is its flexibility to construct a decision tree based on objectives that can reflect different requirements of the classification process and the different character of the data. These objectives are represented by a fitness function. The role of the fitness function is to assess how well the decision tree classifies the training data.

Fig. A4. Concept of evolutionary approach to construct decision tree.

Fig. A5. Example of a decision tree represented as a list in genetic programming.

The simplest fitness function represents a single objective: ensuring the highest percentage of correct classification without taking into consideration the classification rate for each data class. In such a case the fitness function is as follows:

$$\mathrm{FitFun}_a = \frac{K}{N}$$

where $N$ represents the number of data samples in the training set, and $K$ represents the number of correctly classified samples. Such a fitness function gives satisfactory results when the number of data samples in each class is comparable.

In many cases the character of the processed data is such that not all classes are represented equally. In this case the fitness function should ensure the highest possible classification rate for each class. An example of such a fitness function is presented below:

$$\mathrm{FitFun}_b = \prod_{i=1}^{c} \frac{k_i + 1}{n_i}$$

where $c$ represents the number of different classes, $n_i$ represents the number of data samples belonging to class $i$, and $k_i$ is the number of correctly classified samples of class $i$.
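The difference between the two fitness functions shows up on imbalanced data. The sketch below computes both for two hypothetical classifiers on a toy set with nine ‘good’ objects and one ‘bad’ object; the function names and the data are assumptions.

```python
from collections import Counter
from math import prod


def fit_fun_a(y_true, y_pred):
    """K / N: fraction of correctly classified samples."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def fit_fun_b(y_true, y_pred):
    """Product over classes of (k_i + 1) / n_i."""
    n = Counter(y_true)                                       # n_i: samples per class
    k = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # k_i: correct per class
    return prod((k[c] + 1) / n[c] for c in n)


y_true = ["good"] * 9 + ["bad"]
always_good = ["good"] * 10                 # ignores the minority class entirely
balanced = ["good"] * 8 + ["bad", "bad"]    # one 'good' missed, the 'bad' object found

print(fit_fun_a(y_true, always_good), fit_fun_a(y_true, balanced))  # 0.9 0.9
print(fit_fun_b(y_true, always_good), fit_fun_b(y_true, balanced))
```

Both classifiers reach the same value of the first function, whereas the second function evaluates to 10/9 for the classifier that ignores the minority class and to 2 for the one that recognizes it.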

References

[1] V.R. Basili, L.C. Briand, W. Melo, A validation of object-oriented

design metrics as quality indicators, IEEE Transactions on Software

Engineering 22 (10) (1996) 751–761.

[2] B.A. Kitchenham, R.T. Hughes, S.G. Linkman, Modeling software measurement data, IEEE Transactions on Software Engineering 27 (9) (2001) 788–804.

[3] S.R. Chidamber, C.F. Kemerer, A metrics suite for object-oriented

design, IEEE Transactions on Software Engineering 20 (6) (1994)

476–493.

[4] W. Pedrycz, Computational Intelligence: An Introduction, CRC Press,

Boca Raton, 1997.

[5] W. Pedrycz, Granular computing: an introduction, Proceedings of the

Joint Ninth IFSA World Congress and 20th NAFIPS International

Conference, Vancouver, Canada July (2001) 1349–1354.

[6] D.E. Goldberg, Genetic Algorithms in Search, Optimization, and

Machine Learning, Addison-Wesley, Reading, MA, 1989.

[7] J.R. Koza, Genetic Programming, MIT Press, Cambridge, 1992.

[8] T. Back, D.B. Fogel, Z. Michalewicz (Eds.), Evolutionary Compu-

tations I, Institute of Physics Publishing, Bristol, 2000.

[9] W. Pedrycz, F. Gomide, An Introduction to Fuzzy Sets; Analysis and

Design, MIT Press, Cambridge, 1998.

[10] W. Pedrycz, M. Reformat, Evolutionary optimization of fuzzy

models, in: V. Dimitrov, V. Korotkich (Eds.), Fuzzy Logic: A

Framework for the New Millennium, Studies in Fuzziness and Soft

Computing, vol. 81, Physica-Verlag, 2002, pp. 168–203.

[11] W. Pedrycz, M. Reformat, G. Succi, P. Musilek, Evolutionary

development of transparent models of software measures, First

ASERC Workshop on Quantitative Software Engineering, Banff,

Alberta, Canada February (2001) 49–57.

[12] W. Pedrycz, J.F. Peters (Eds.), Computational Intelligence in

Software Engineering, Advances In Fuzzy Systems—Applications

and Theory, vol. 16, World Scientific, Singapore, 1998.

[13] R.M. Szabo, T.M. Khoshgoftaar, The detection of high risk software

modules in an object-oriented system, in: H. Pham, M.-W. Lu (Eds.),

Proceedings of the Fifth ISSAT International Conference on

Reliability and Quality in Design, Las Vegas, Nevada USA, 1999,

pp. 142–147.

[14] T.M. Khoshgoftaar, E.B. Allen, A practical classification rule for

software quality models, IEEE Transactions on Reliability 49 (2)

(2000).

[15] J. Lee, N.L. Xue, K.H. Hsu, S.J.H. Yang, Modeling imprecise

requirements with fuzzy objects, Information Sciences 118 (1999)

101–119.

[16] J. Lee, J.Y. Kuo, N.L. Xue, A note on current approaches to extending

fuzzy logic to object-oriented modeling, International Journal of

Intelligent Systems 16 (7) (2001) 807–820.

[17] J.-H. Jahnke, W. Schafer, A. Zundorf, Generic fuzzy reasoning nets as

a basis for reverse engineering relational database applications,

European Software Engineering Conference (ESEC97), Springer,

Zuerich, 1997, LNCS 1301.

[18] M. Reformat, W. Pedrycz, N. Pizzi, Building a software experience

factory using granular-based models, Fuzzy Sets and Systems (2003).

[19] T. Kohonen, Self-organized formation of topologically correct feature

maps, Biological Cybernetics 43 (1982) 59–69.

[20] T. Kohonen, Self-organizing Maps, Springer, Berlin, 1995.

[21] W. Pedrycz, G. Succi, M. Reformat, P. Musilek, X. Bai, Expressing

similarity in software engineering, Proceedings of the Second

International Workshop on Soft Computing Applied to Software

Engineering, Enschede, The Netherlands February (2001) 6.

[22] J.F. Peters, W. Pedrycz, Software Engineering: An Engineering

Approach, Wiley, New York, 2000, pp. 505–551.

[23] G. Phipps, Comparing observed bug and productivity rates for Java and C++, Software Practice and Experience 29 (1999) 345–358.

[24] J.H. Holland, Adaptation in Natural and Artificial Systems, University

of Michigan Press, Ann Arbor, 1975.
